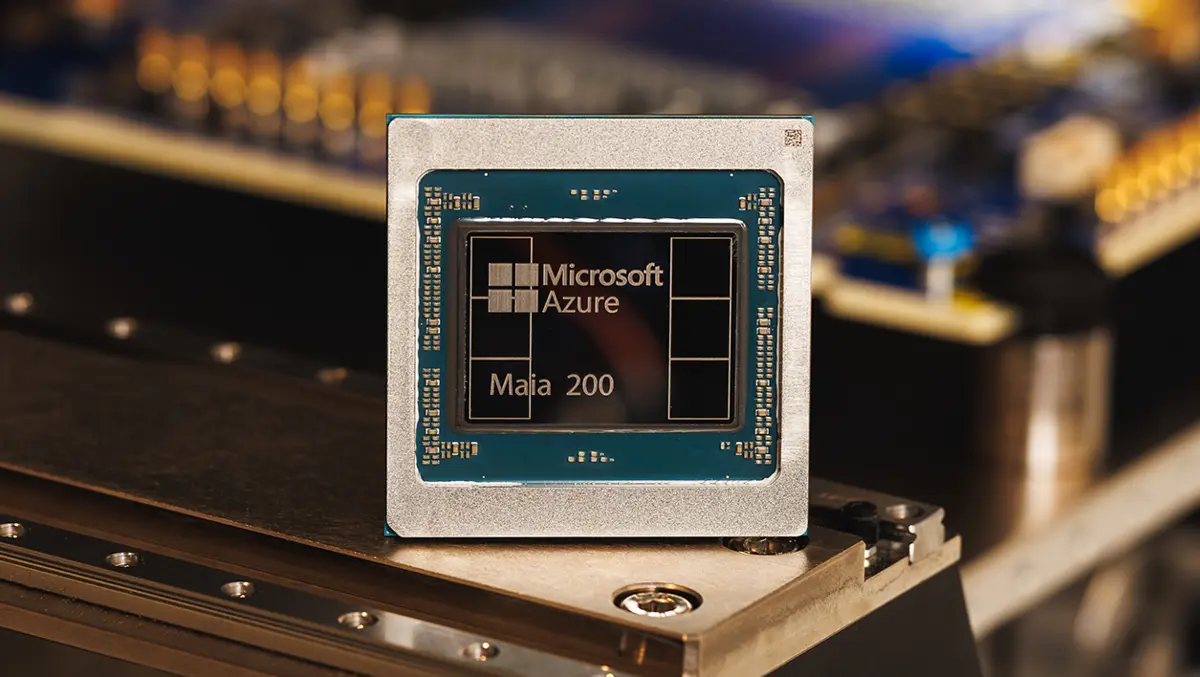

Microsoft has introduced Maia 200, a custom artificial intelligence (AI) accelerator designed for inference workloads in large-scale models. The chip is being deployed in the company's cloud infrastructure and is intended to improve the cost efficiency and performance of serving AI applications.

Design focus

Maia 200 is built on a three-nanometre process from TSMC and incorporates more than 140 billion transistors. The architecture is tailored for low-precision compute operations common in modern AI inference, with dedicated support for 4-bit (FP4) and 8-bit (FP8) tensor arithmetic.

The accelerator integrates 216 gigabytes of HBM3e memory with roughly 7 terabytes per second of bandwidth, alongside 272 megabytes of on-chip SRAM. This memory configuration is aimed at reducing the data-movement bottlenecks that can limit real-world performance for large models.
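To put the memory figures in context, the short sketch below works out how many model parameters could fit in 216 gigabytes at FP4 and FP8, and how long one full pass over the HBM would take at roughly 7 terabytes per second. It is a back-of-the-envelope illustration, not a Microsoft figure: it assumes decimal units, counts weights only (no KV cache, activations or runtime overhead), and the function names are hypothetical.

```python
# Back-of-the-envelope memory arithmetic from the quoted specifications.
# Assumptions (not from Microsoft): decimal units (1 GB = 1e9 bytes),
# weights only, peak bandwidth sustained for the whole read.

HBM_CAPACITY_BYTES = 216e9     # 216 GB of HBM3e
HBM_BANDWIDTH_BYTES_S = 7e12   # roughly 7 TB/s

# Storage cost per parameter for each number format.
BYTES_PER_PARAM = {"FP4": 0.5, "FP8": 1.0}


def max_params(fmt: str) -> float:
    """Upper bound on the parameter count whose weights fit in HBM."""
    return HBM_CAPACITY_BYTES / BYTES_PER_PARAM[fmt]


def full_sweep_seconds() -> float:
    """Time to read the entire HBM contents once at peak bandwidth."""
    return HBM_CAPACITY_BYTES / HBM_BANDWIDTH_BYTES_S


if __name__ == "__main__":
    for fmt in BYTES_PER_PARAM:
        print(f"{fmt}: up to {max_params(fmt) / 1e9:.0f} billion parameters")
    print(f"Full HBM sweep: {full_sweep_seconds() * 1e3:.1f} ms")
```

Under these assumptions a single chip could hold the weights of a model with up to about 432 billion parameters at FP4, or 216 billion at FP8, and reading all of HBM once would take about 31 milliseconds. Real deployments would fit less, because memory is also needed for caches and activations.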

Each Maia 200 chip operates within a 750-watt thermal design envelope and delivers more than 10 petaFLOPS of FP4 performance and more than 5 petaFLOPS of FP8 performance. According to Microsoft, this balance of compute and memory resources is intended to serve production-scale AI workloads efficiently.
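The balance between compute and memory can be made concrete with two simple ratios: peak throughput per watt, and the number of floating-point operations the chip can perform for each byte it reads from HBM (the "ridge point" in a roofline analysis). The sketch below derives both from the quoted figures. It treats the stated minimums as exact peak values and the 750-watt envelope as the actual power draw, both of which are simplifying assumptions; the function names are illustrative.

```python
# Ratios derived from the quoted specifications.
# Assumptions (not from Microsoft): the "more than" figures are treated as
# exact peaks, and the 750 W design envelope is treated as power drawn.

PEAK_FLOPS = {"FP4": 10e15, "FP8": 5e15}   # FLOP/s
TDP_WATTS = 750.0
HBM_BANDWIDTH_BYTES_S = 7e12               # roughly 7 TB/s


def tflops_per_watt(fmt: str) -> float:
    """Peak teraFLOPS per watt of thermal design power."""
    return PEAK_FLOPS[fmt] / TDP_WATTS / 1e12


def ridge_point(fmt: str) -> float:
    """FLOPs per HBM byte above which a kernel is compute-bound."""
    return PEAK_FLOPS[fmt] / HBM_BANDWIDTH_BYTES_S


if __name__ == "__main__":
    for fmt in PEAK_FLOPS:
        print(
            f"{fmt}: {tflops_per_watt(fmt):.1f} TFLOPS/W, "
            f"ridge point {ridge_point(fmt):.0f} FLOP/byte"
        )
```

On these numbers, FP4 work comes to about 13.3 teraFLOPS per watt and needs roughly 1,430 operations per byte fetched from HBM before compute, rather than memory bandwidth, becomes the limit. That gap is one reason a large on-chip SRAM matters for inference.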

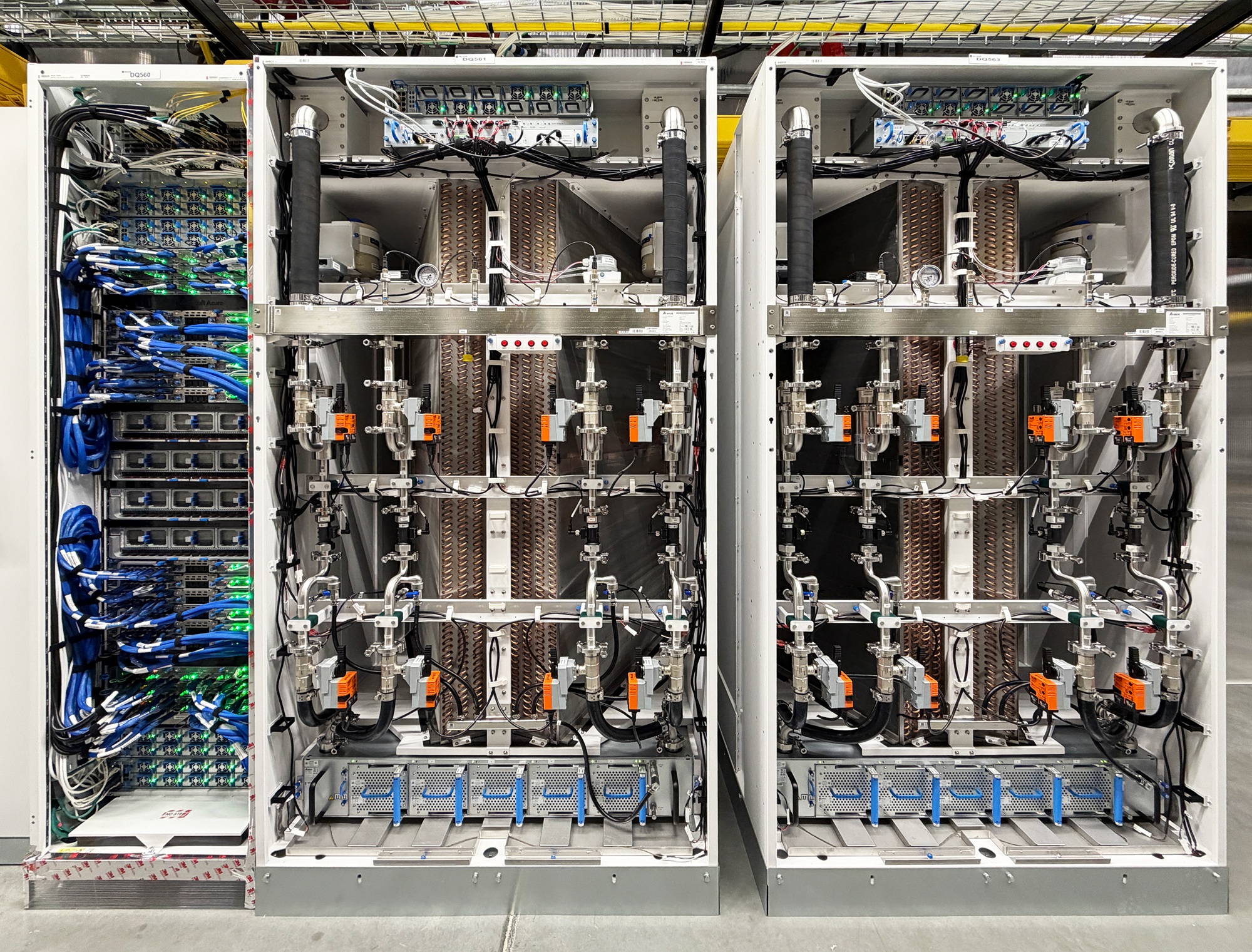

Cluster scaling

At the system level, Microsoft has paired Maia 200 with a two-tier scale-up network design based on standard Ethernet. A custom transport layer and an integrated network interface support collective operations across clusters of up to 6,144 accelerators. Each accelerator provides about 2.8 terabytes per second of dedicated bidirectional bandwidth for scale-up communication.

Within each tray, four Maia accelerators are linked directly to one another rather than through a switch. This arrangement is intended to keep high-bandwidth communication local and improve scalability across racks.
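The cluster figures can be combined with the per-chip specifications to estimate what a maximum-size deployment would add up to. The sketch below is a simple multiplication under stated assumptions: it takes the quoted per-chip minimums as exact, assumes every accelerator sits in a fully populated four-chip tray, and sums per-chip link bandwidth without modelling the network topology. None of the totals are published by Microsoft.

```python
# Aggregate figures for a maximum-size cluster, by simple multiplication.
# Assumptions (not from Microsoft): per-chip minimums treated as exact,
# all trays fully populated, no allowance for topology or contention.

MAX_ACCELERATORS = 6144
ACCELERATORS_PER_TRAY = 4

FP4_FLOPS_PER_CHIP = 10e15          # FLOP/s
HBM_BYTES_PER_CHIP = 216e9          # bytes
SCALE_UP_BYTES_S_PER_CHIP = 2.8e12  # bytes/s, bidirectional


def tray_count() -> int:
    """Number of four-accelerator trays in a full cluster."""
    return MAX_ACCELERATORS // ACCELERATORS_PER_TRAY


def cluster_fp4_exaflops() -> float:
    """Summed peak FP4 throughput, in exaFLOPS."""
    return MAX_ACCELERATORS * FP4_FLOPS_PER_CHIP / 1e18


def cluster_hbm_petabytes() -> float:
    """Summed HBM capacity, in petabytes."""
    return MAX_ACCELERATORS * HBM_BYTES_PER_CHIP / 1e15


def cluster_scale_up_pb_per_s() -> float:
    """Summed per-chip scale-up bandwidth, in PB/s (not bisection)."""
    return MAX_ACCELERATORS * SCALE_UP_BYTES_S_PER_CHIP / 1e15


if __name__ == "__main__":
    print(f"Trays: {tray_count()}")
    print(f"Peak FP4: {cluster_fp4_exaflops():.2f} exaFLOPS")
    print(f"HBM: {cluster_hbm_petabytes():.2f} PB")
    print(f"Scale-up bandwidth: {cluster_scale_up_pb_per_s():.1f} PB/s")
```

A full cluster on these assumptions would span 1,536 trays, with about 61 exaFLOPS of peak FP4 compute and about 1.3 petabytes of HBM. Sustained performance on real workloads would be lower than these theoretical sums.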

Usage and deployment

Microsoft reports that Maia 200 is already operational in its U.S. Central data centre near Des Moines, Iowa, with additional regions, such as U.S. West 3 in Phoenix, planned for rollout. The accelerator forms part of the company's heterogeneous AI infrastructure, supporting multiple models and services within its cloud offerings.

The company says Maia 200 is being used to accelerate services such as Microsoft Foundry and Microsoft 365 Copilot, as well as OpenAI's GPT-5.2 model. Internal teams are also using the accelerator for tasks such as synthetic data generation and reinforcement learning.

Ecosystem tools

Microsoft is making a preview of a software development kit (SDK) available for Maia 200. The toolkit includes integration with popular frameworks such as PyTorch, support for the Triton compiler, optimised kernel libraries and a low-level programming language. The SDK is designed to help developers optimise models and workloads for the chip.

Performance claims

The company states that Maia 200 offers about 30 per cent better performance per dollar for inference compared with the latest hardware in its fleet. Microsoft also positions the accelerator's FP4 performance as roughly three times that of Amazon's third-generation Trainium processor, and claims the FP8 performance exceeds that of Google's seventh-generation TPU.
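A gain in performance per dollar does not translate one-for-one into a cost reduction. The sketch below shows the conversion for the 30 per cent figure, assuming the workload and throughput target stay the same; the function names are illustrative and the calculation is not taken from Microsoft.

```python
# Converting a performance-per-dollar gain into a cost saving.
# Assumption (not from Microsoft): the same amount of work is served
# before and after, so only the cost side changes.


def relative_cost(perf_per_dollar_gain: float) -> float:
    """Cost of the same work relative to the baseline (1.0 = unchanged)."""
    return 1.0 / (1.0 + perf_per_dollar_gain)


def cost_saving_percent(perf_per_dollar_gain: float) -> float:
    """Percentage reduction in cost for the same work."""
    return (1.0 - relative_cost(perf_per_dollar_gain)) * 100.0


if __name__ == "__main__":
    gain = 0.30  # "about 30 per cent better performance per dollar"
    print(f"Relative cost: {relative_cost(gain):.3f}")
    print(f"Cost saving: {cost_saving_percent(gain):.1f}%")
```

On this reading, 30 per cent better performance per dollar corresponds to serving the same inference load for about 77 per cent of the baseline cost, a saving of roughly 23 per cent.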

"Maia 200 is engineered to excel at narrow-precision compute while keeping large models fed, fast, and highly utilised," said Scott Guthrie, Executive Vice President, Cloud + AI, Microsoft.